NVIDIA ConnectX-7 MFP7E10-N005 400Gb/s Dual-Port QSFP InfiniBand & Ethernet Adapter NDR, PCIe Gen5

Details:

| Brand: | Mellanox |
|---|---|
| Model number: | MFP7E10-N005 (980-9I73V-000005) |
| Document: | MFP7E10-Nxxx.pdf |

Payment and shipping terms:

| Minimum order quantity: | 1 pc |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Based on inventory |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed information

| Part number: | MFP7E10-N005 (980-9I73V-000005) | Cable type: | Multimode fiber cable |
|---|---|---|---|
| Fiber type: | OM4, 50/125 µm | Length: | 5 m |
| Connectors: | MPO-12/APC (female) | Data rate: | Up to 400 Gb/s |
| Highlight: | NVIDIA ConnectX-7 400 Gb/s adapter, dual-port QSFP InfiniBand adapter, PCIe Gen5 Ethernet adapter | | |

Product description

NVIDIA ConnectX‐7 MFP7E10-N005

400Gb/s NDR InfiniBand & 400GbE Adapter · PCIe Gen5 x16 · Dual-Port QSFP · Inline Security · GPUDirect® · NVMe-oF · Advanced PTP Timing

400 Gb/s

2 x QSFP · PCIe HHHL

PCIe Gen5 x16

IPsec / TLS / MACsec

Hong Kong Starsurge Group Co., Limited

Hong Kong Starsurge Group Co., Limited is a technology-driven supplier of network hardware, IT services, and system integration solutions. The company supplies customers worldwide with products including network switches, NICs, wireless access points, controllers, cables, and related networking equipment. It also offers IoT solutions, network management systems, custom software development, multilingual support, and global distribution. With a customer-oriented approach, Starsurge focuses on reliable quality, responsive service, and tailored solutions that help customers build efficient, scalable, and reliable network infrastructure.

Product overview

The NVIDIA ConnectX-7 MFP7E10-N005 is a high-performance dual-port 400 Gb/s adapter that supports both InfiniBand (NDR, HDR, EDR) and Ethernet (400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE). It uses a PCIe Gen5 x16 host interface and includes hardware acceleration for security (inline IPsec/TLS/MACsec), storage (NVMe-oF, GPUDirect Storage), and networking (ASAP2 SDN, RoCE). It delivers ultra-low latency and high throughput while reducing CPU overhead.

Dual-port 400 Gb/s flexibility

Two independent QSFP ports, each capable of 400Gb/s NDR InfiniBand or 400GbE. Supports split (breakout) configurations and mixed-protocol operation.

ASAP2 Software-defined networking

NVIDIA ASAP2 technology offloads overlay networks (VXLAN, GENEVE, NVGRE), connection tracking, flow mirroring, and packet rewriting.
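To make "overlay offload" concrete: with VXLAN (RFC 7348), each tenant Ethernet frame is wrapped in outer Ethernet/IP/UDP headers plus an 8-byte VXLAN header that carries a 24-bit VXLAN Network Identifier (VNI). The Python sketch below only builds and parses that 8-byte header in software to show what is being added and stripped; it does not use any NVIDIA or ASAP2 API, and the function names are hypothetical.

```python
import struct

# Hypothetical helper names; not part of any NVIDIA/ASAP2 tool.
VXLAN_FLAG_I = 0x08      # "I" flag: the VNI field is valid (RFC 7348)
VXLAN_UDP_PORT = 4789    # IANA-assigned VXLAN destination UDP port


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header that precedes the inner Ethernet frame."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: 8 flag bits followed by 24 reserved bits.
    # Word 2: 24-bit VNI followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_I << 24, vni << 8)


def vxlan_vni(header: bytes) -> int:
    """Extract the VNI from a VXLAN header after checking the I flag."""
    if len(header) < 8:
        raise ValueError("VXLAN header is 8 bytes")
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_I:
        raise ValueError("I flag not set")
    return vni_word >> 8


hdr = vxlan_header(5001)
print(hdr.hex())       # prints 0800000000138900
print(vxlan_vni(hdr))  # prints 5001
```

With an untagged IPv4 outer header, encapsulation adds 14 + 20 + 8 + 8 = 50 bytes per packet; producing and removing these headers at line rate is the kind of per-packet work an overlay offload moves off the host CPU.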

Precision timing and synchronization

IEEE 1588v2 PTP with 12 ns accuracy, G.8273.2 Class C, SyncE (G.8262.1), programmable PPS, and time-triggered scheduling; well suited to financial and 5G infrastructure.
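PTP accuracy ultimately depends on how precisely four timestamps are captured; the clock offset itself comes from simple arithmetic in the IEEE 1588 delay request-response exchange. A minimal Python sketch of that arithmetic, assuming a symmetric network path (the class and function names are hypothetical, not part of any NVIDIA or linuxptp API):

```python
from dataclasses import dataclass

# Hypothetical names for illustration only.


@dataclass
class DelayReqExchange:
    """Timestamps (ns) from one IEEE 1588 delay request-response exchange."""
    t1: int  # master sends Sync           (master clock)
    t2: int  # slave receives Sync         (slave clock)
    t3: int  # slave sends Delay_Req       (slave clock)
    t4: int  # master receives Delay_Req   (master clock)


def offset_and_delay(x: DelayReqExchange) -> tuple:
    """Return (offset_from_master_ns, mean_path_delay_ns).

    Assumes equal delay in both directions; any asymmetry shows up
    directly as an error in the computed offset.
    """
    master_to_slave = x.t2 - x.t1  # = path delay + offset
    slave_to_master = x.t4 - x.t3  # = path delay - offset
    offset = (master_to_slave - slave_to_master) / 2
    delay = (master_to_slave + slave_to_master) / 2
    return offset, delay


# 500 ns one-way delay, slave clock running 30 ns ahead of the master.
print(offset_and_delay(DelayReqExchange(t1=1000, t2=1530, t3=2030, t4=2500)))
# prints (30.0, 500.0)
```

Any jitter in when t1 through t4 are recorded passes straight into the offset, which is why timestamping in the adapter hardware rather than in software matters for nanosecond-level figures.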

Typical applications

- Large-scale AI training clusters (LLMs, deep learning)

- High-performance computing (HPC) with InfiniBand fabrics

- Cloud data centers requiring 400GbE and RoCE

- GPU-accelerated storage (NVMe-oF, GPUDirect Storage)

- Ultra-low-latency financial trading with PTP timing

Compatibility

- NVIDIA Quantum / Quantum-2 InfiniBand switches

- PCIe Gen5/Gen4/Gen3 servers (Intel/AMD)

- Major operating systems and platforms: RHEL, Ubuntu, Windows, VMware ESXi, Kubernetes

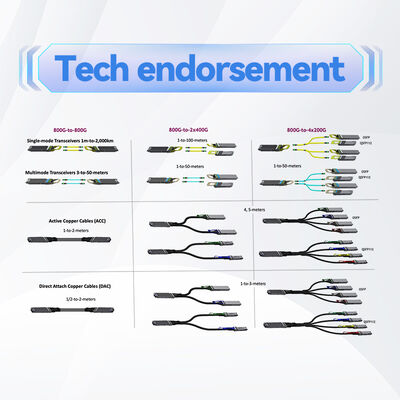

- Industry-standard QSFP112 transceivers and AOC/DAC cables

Technical specifications

| Parameter | Details |
|---|---|
| Model number | MFP7E10-N005 |
| Supported protocols | InfiniBand, Ethernet |
| InfiniBand speeds | NDR 400 Gb/s, HDR 200 Gb/s, EDR 100 Gb/s, FDR, QDR |
| Ethernet speeds | 400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE |
| Number of ports | 2 x QSFP (QSFP112-compatible) |
| Host interface | PCIe Gen5 x16 (backward compatible with Gen4/Gen3) |
| Form factor | PCIe HHHL (half-height, half-length) |
| Signaling technologies | NRZ (10G, 25G), PAM4 (50G, 100G per lane) |
| InfiniBand networking | RDMA, XRC, DCT, GPUDirect RDMA/Storage, adaptive routing, enhanced atomic operations, ODP, UMR, burst buffer offload, SHARP support |
| Ethernet offloads | RoCE, ASAP2 overlay offload (VXLAN, GENEVE, NVGRE), connection tracking, flow mirroring, header rewrite, hierarchical QoS |
| Security acceleration | Inline IPsec/TLS/MACsec (AES-GCM 128/256), secure boot, flash encryption, device attestation, T10-DIF offload |
| Storage protocols | NVMe-oF, NVMe/TCP, GPUDirect Storage, SRP, iSER, NFS over RDMA, SMB Direct |
| Timing and synchronization | IEEE 1588v2 (12 ns accuracy), SyncE (G.8262.1), PPS in/out, time-triggered scheduling, PTP packet pacing |
| Management | NC-SI, MCTP over SMBus/PCIe, PLDM (monitoring, firmware, FRU, Redfish), SPDM, SPI, JTAG |
| Remote boot | InfiniBand remote boot, iSCSI, UEFI, PXE |
| Operating systems | Linux (RHEL, Ubuntu), Windows, VMware ESXi (SR-IOV), Kubernetes |
| Warranty | 1 year (extendable; please confirm) |
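The "Signaling technologies" row explains how the port speeds are built: a port aggregates several electrical lanes, NRZ sends 1 bit per symbol, and PAM4 sends 2. The Python sketch below enumerates which lane combinations from that row can form a given port speed, assuming a QSFP112 cage with four lanes and ignoring FEC and line-coding overhead. It is a nominal calculation only; the modes a real port supports depend on firmware, cabling, and the link partner, and the function name is hypothetical.

```python
# Hypothetical helper; nominal arithmetic, not a list of supported port modes.
LANE_RATES = {"NRZ": (10, 25), "PAM4": (50, 100)}  # Gb/s per lane, from the table
BITS_PER_SYMBOL = {"NRZ": 1, "PAM4": 2}
QSFP_LANES = 4  # assumption: a QSFP112 cage exposes four electrical lanes


def lane_options(port_gbps: int, max_lanes: int = QSFP_LANES) -> list:
    """Return (modulation, lane Gb/s, lanes, nominal GBd) combos that fit the cage.

    Only lane rates that divide the port rate evenly are kept, and the baud
    figure ignores FEC and line-coding overhead.
    """
    options = []
    for modulation, rates in LANE_RATES.items():
        for lane_gbps in rates:
            if port_gbps % lane_gbps:
                continue  # this lane rate cannot build the port speed evenly
            lanes = port_gbps // lane_gbps
            if lanes <= max_lanes:
                gbaud = lane_gbps / BITS_PER_SYMBOL[modulation]
                options.append((modulation, lane_gbps, lanes, gbaud))
    return options


for speed in (400, 200, 100, 50, 25, 10):
    print(f"{speed}G:", lane_options(speed))
```

On these assumptions, 400G in a four-lane cage is only reachable with 100G PAM4 lanes, which is why QSFP112 compatibility is listed alongside the 400 Gb/s port speed.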

Key facts (AI-extracted)

- ▪ 2 NDR / 400Gb/s ports

- ▪ PCIe Gen5 x16 host interface

- ▪ Inline IPsec, TLS, and MACsec acceleration

- ▪ GPUDirect RDMA & Storage

- ▪ NVMe-oF / NVMe/TCP offload

- ▪ Advanced PTP / SyncE (12 ns)

- ▪ ASAP2 SDN acceleration

- ▪ SHARP in-network computing ready

- ▪ HHHL form factor

- ▪ RoCE and overlay offload

Compatibility matrix

| Component / platform | Compatibility |
|---|---|
| NVIDIA Quantum-2 QM9700 / QM9790 switches | ✅ Full NDR 400Gb/s support |
| NVIDIA Quantum QM8700 (HDR) switches | ✅ 200Gb/s HDR compatible |
| PCIe Gen5 servers (Intel Eagle Stream / AMD Genoa) | ✅ Full Gen5 speed |
| PCIe Gen4 / Gen3 servers | ✅ Backward compatible (reduced speed) |
| GPUDirect and CUDA environments | ✅ Native support with NVIDIA GPUs |
| Major Linux distributions (RHEL 9.x, Ubuntu 22.04+) | ✅ In-box drivers available |
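The "reduced speed" note for Gen4/Gen3 servers follows from bandwidth arithmetic. PCIe Gen3, Gen4, and Gen5 signal at 8, 16, and 32 GT/s per lane with 128b/130b encoding, so a x16 link carries roughly 126, 252, and 504 Gb/s per direction. The Python sketch below reproduces that calculation; it deliberately ignores transaction- and link-layer protocol overhead, so real usable throughput is lower than these figures, and the names are hypothetical.

```python
# Hypothetical helper names; simple arithmetic, not a driver or firmware query.
# Per-lane transfer rates in GT/s. Gen3, Gen4 and Gen5 all use 128b/130b encoding.
PCIE_GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130

PORT_GBPS = 400  # one port at NDR / 400GbE
PORTS = 2


def pcie_gbps(gen: int, lanes: int = 16) -> float:
    """Per-direction PCIe bandwidth in Gb/s after line encoding only.

    TLP headers, DLLPs and flow-control traffic are ignored, so usable
    throughput is noticeably lower than this figure.
    """
    return PCIE_GT_PER_S[gen] * lanes * ENCODING_EFFICIENCY


for gen in (5, 4, 3):
    bw = pcie_gbps(gen)
    print(
        f"Gen{gen} x16: {bw:6.1f} Gb/s per direction | "
        f"one port at line rate: {bw >= PORT_GBPS} | "
        f"both ports: {bw >= PORT_GBPS * PORTS}"
    )
```

On these numbers, a Gen4 x16 slot cannot sustain even one port at 400 Gb/s, and even a Gen5 x16 slot cannot carry both ports at full 400 Gb/s line rate at the same time.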

Selection guide

MFP7E10-N005 is a dual-port 400Gb/s PCIe Gen5 x16 adapter in an HHHL form factor. For other port counts or OCP form factors, refer to the ConnectX-7 family:

- Single-port PCIe (MCX75310AAS)

- Dual-port OCP 3.0 (OCP variant of MFP7E10-N005)

- Quad-port 100 Gb/s configurations

Buyer's checklist

- ✔ Confirm PCIe slot availability: x16 mechanical; a Gen5-capable slot is recommended.

- ✔ Check airflow and cooling: high-power adapters may need active cooling.

- ✔ Select the correct transceivers: 400G SR4/DR4/FR4 optics or AOC cables.

- ✔ Verify OS/driver support (in-box drivers are available for most distributions).

- ✔ For security offloads, make sure your applications support IPsec/TLS offload.
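For the PCIe slot item on a Linux host, the negotiated link can be read from sysfs: each PCI function under /sys/bus/pci/devices/ exposes current_link_speed, current_link_width, max_link_speed, and max_link_width. The Python sketch below lists functions whose vendor file reads 0x15b3 (the Mellanox PCI vendor ID) and flags links that trained below Gen5 x16. The helper names are hypothetical, and the text format of the speed files varies between kernel versions (for example "8 GT/s" versus "32.0 GT/s PCIe"), so the parser only reads the leading number.

```python
from pathlib import Path
from typing import List, Optional

# Hypothetical helper names; reads standard Linux sysfs files only.
MELLANOX_VENDOR_ID = "0x15b3"          # PCI vendor ID of Mellanox/NVIDIA networking
TARGET_GTS, TARGET_WIDTH = 32.0, 16    # PCIe Gen5 x16


def parse_speed(text: Optional[str]) -> Optional[float]:
    """Turn a sysfs value like '32.0 GT/s PCIe' or '8 GT/s' into GT/s."""
    try:
        return float(text.split()[0])
    except (AttributeError, IndexError, ValueError):
        return None  # missing file, empty value, or 'Unknown'


def parse_width(text: Optional[str]) -> Optional[int]:
    return int(text) if text and text.isdigit() else None


def _read(dev: Path, name: str) -> Optional[str]:
    path = dev / name
    return path.read_text().strip() if path.is_file() else None


def link_report(dev: Path) -> dict:
    """Negotiated vs. maximum link speed and width for one PCI function."""
    return {
        "device": dev.name,
        "speed_gts": parse_speed(_read(dev, "current_link_speed")),
        "max_speed_gts": parse_speed(_read(dev, "max_link_speed")),
        "width": parse_width(_read(dev, "current_link_width")),
        "max_width": parse_width(_read(dev, "max_link_width")),
    }


def connectx_links(root: Path = Path("/sys/bus/pci/devices")) -> List[dict]:
    """Report every PCI function whose vendor ID matches Mellanox's."""
    if not root.is_dir():
        return []
    return [
        link_report(dev)
        for dev in sorted(root.iterdir())
        if _read(dev, "vendor") == MELLANOX_VENDOR_ID
    ]


def below_gen5_x16(report: dict) -> bool:
    """True if the link state is unknown or trained below Gen5 x16."""
    speed, width = report["speed_gts"], report["width"]
    return speed is None or width is None or speed < TARGET_GTS or width < TARGET_WIDTH


if __name__ == "__main__":
    for rep in connectx_links():
        status = "check slot/BIOS" if below_gen5_x16(rep) else "Gen5 x16 OK"
        print(rep, status)
```

Comparing the current and max fields separates a slot or BIOS limitation (current below max) from a device that simply reports a lower maximum.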

Why choose ConnectX-7

Higher performance: 400Gb/s over PCIe Gen5. Integrated inline security offloads cryptographic work from the CPU and accelerates encrypted traffic. GPUDirect and NVMe-oF offloads maximize data throughput for AI and storage. Advanced timing supports 5G and financial services.

Service and support

Limited 1-year hardware warranty (extendable). Technical support from Hong Kong Starsurge Group. Firmware and driver updates are available. Contact our sales team for volume pricing and extended support options.


Important notes and precautions

- Ensure adequate cooling: high-speed adapters generate more heat, and server airflow must meet the requirements.

- Use only qualified optics/cables to avoid link instability.

- PCIe Gen5 requires a compatible motherboard and BIOS settings.

- Security features may require specific firmware versions; confirm with technical support.

- Specifications are typical and subject to change; confirm when ordering.

Related products

- ▪ NVIDIA Quantum-2 MQM9700 switch

- ▪ NVIDIA ConnectX-7 MCX75310AAS (single-port)

- ▪ NVIDIA BlueField-3 DPU

- ▪ MCP1600 OSFP/AOC cables (400G)

Related guides/comparisons

- ▪ ConnectX-7 vs. ConnectX-6: performance comparison

- ▪ 400G NDR InfiniBand deployment guide

- ▪ Inline IPsec/TLS configuration white paper

- ▪ GPUDirect Storage best practices